Journal

“Gil Scott-Heron Saved Me”

From The Guardian in 2011, shortly after Gil Scott-Heron’s sad death, here’s a beautiful and moving account of how the musical legend offered hope and mentorship to a young man from Liverpool and in so doing turned his life around.

Thanks to Nick Peacock for sharing.

“Your interview test for junior developer” (from Bruce Lawson on Twitter)

"Ok, as part of your interview test for junior developer, we want you to put some words, an image and some links onto a webpage. We use Node, Docker, Kubernetes, React, Redux, Puppeteer, Babel, Bootstrap, Webpack,

<div> and <span>. Go!"

Bruce Lawson nicely illustrates how ridiculous many job adverts for web developers are. This (see video) never fails to crack me up. It’s funny ‘cos it’s true!

(via @brucel)

Progressively Enhanced JavaScript with Stimulus

I’m dipping my toes into Stimulus, the JavaScript micro-framework from Basecamp. Here are my initial thoughts.

I immediately like the ethos of Stimulus.

The creators’ take is that in many cases, using one of the popular contemporary JavaScript frameworks is overkill.

We don’t always need a nuclear solution that:

- takes over our whole front end;

- renders entire, otherwise empty pages from JSON data;

- manages state in JavaScript objects or Redux; or

- requires a proprietary templating language.

Instead, Stimulus suggests a more “modest” solution – using an existing server-rendered HTML document as its basis (either from the initial HTTP response or from an AJAX call), and then progressively enhancing it.

It advocates readable markup – being able to read a fragment of HTML which includes sprinkles of Stimulus and easily understand what’s going on.

And interestingly, Stimulus proposes storing state in the HTML/DOM.

How it works

Stimulus’s technical purpose is to automatically connect DOM elements to JavaScript objects, which are implemented as ES6 classes. The connection is made by data- attributes (rather than id or class attributes).

data-controller values connect and disconnect Stimulus controllers.

The key elements are:

- Controllers

- Actions (essentially event handlers) which trigger controller methods

- Targets (elements which we want to read or write to, mapped to controller properties)
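To make the link between actions and controller methods concrete: in Stimulus markup an action is written as a descriptor in a data-action attribute, in the form event->controller#method. The sketch below is a toy illustration only, not how Stimulus is implemented. The names SlideshowController, parseAction and trigger are made up for this example, and plain objects stand in for the DOM so that it runs in Node.

```javascript
// Toy illustration only: this is NOT Stimulus's implementation.
// The controller and method names (slideshow, next) are made up.
class SlideshowController {
  constructor() {
    this.index = 0;
  }

  next() {
    this.index += 1;
  }
}

// Split a full descriptor such as "click->slideshow#next" into its parts.
function parseAction(descriptor) {
  const match = /^(.+)->(.+)#(.+)$/.exec(descriptor.trim());
  if (!match) {
    throw new Error(`Not a full action descriptor: ${descriptor}`);
  }
  const [, eventName, controller, method] = match;
  return { eventName, controller, method };
}

// Look up the controller named in the descriptor and call its method,
// as an event listener would do when the event fires.
function trigger(descriptor, controllers) {
  const { controller, method } = parseAction(descriptor);
  controllers[controller][method]();
}

const controllers = { slideshow: new SlideshowController() };
trigger("click->slideshow#next", controllers);
console.log(controllers.slideshow.index); // 1
```

The point of the descriptor is that the HTML names the controller and method, so the JavaScript never needs to query for a specific element.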

Some nice touches

I like the way you can use the connect() method – a lifecycle callback invoked whenever a given controller is connected to the DOM – as a place to test browser support for a given feature before applying a JS-based enhancement.

Stimulus also readily supports the ability to have multiple instances of a controller on the page.

Furthermore, actions and targets can be added to any type of element without the controller JavaScript needing to know or care about the specific element, promoting loose coupling between HTML and JavaScript.

Managing State in Stimulus

Initial state can be read in from our DOM element via a data- attribute, e.g. data-slideshow-index.

Then in our controller object we have access to a this.data API with has(), get(), and set() methods. We can use those methods to set new values back into our DOM attribute, so that state lives entirely in the DOM without the need for a JavaScript state object.
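Here is a minimal sketch of that idea, assuming a controller identifier of slideshow. FakeElement and DataMap are hypothetical stand-ins written for this example (so that it runs in Node without a browser or Stimulus); they only imitate the has(), get() and set() behaviour described above.

```javascript
// Stand-in for a DOM element: just enough of the attribute API to run in Node.
class FakeElement {
  constructor(attributes = {}) {
    this.attributes = new Map(Object.entries(attributes));
  }

  hasAttribute(name) {
    return this.attributes.has(name);
  }

  getAttribute(name) {
    return this.attributes.has(name) ? this.attributes.get(name) : null;
  }

  setAttribute(name, value) {
    this.attributes.set(name, String(value));
  }
}

// Rough imitation of the this.data API: each key maps to the
// attribute data-<identifier>-<key> on the controller's element.
class DataMap {
  constructor(element, identifier) {
    this.element = element;
    this.identifier = identifier;
  }

  attributeName(key) {
    return `data-${this.identifier}-${key}`;
  }

  has(key) {
    return this.element.hasAttribute(this.attributeName(key));
  }

  get(key) {
    return this.element.getAttribute(this.attributeName(key));
  }

  set(key, value) {
    this.element.setAttribute(this.attributeName(key), value);
  }
}

const element = new FakeElement({ "data-slideshow-index": "1" });
const data = new DataMap(element, "slideshow");

// Read the state, advance it, and write it straight back to the attribute.
const nextIndex = parseInt(data.get("index"), 10) + 1;
data.set("index", nextIndex);
console.log(element.getAttribute("data-slideshow-index")); // logs 2
```

Note that attribute values are always strings, so anything numeric has to be parsed on the way out.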

Possible Limitations

Stimulus feels a little restrictive if dealing with less simple elements – say, for example, a data table with lots of rows and columns, each differing in multiple ways.

And if, like in our data table example, that element has lots of child elements, it feels like there might be more of a performance hit to update each one individually rather than replace the contents with new innerHTML in one fell swoop.

Summing Up

I love Stimulus’s modest and progressive enhancement friendly approach. I can see me adopting it as a means of writing modern, modular JavaScript which fits well in a webpack context in situations where the interactive elements are relatively simple and not composed of complex, multidimensional data.

How to manage JavaScript dependencies

Managing a project’s third-party JavaScript dependencies is about as much fun as a poke in the eye. However even if you’re like me and prefer keeping things lean and dependency-free as far as possible, it’s something you’re likely to need to do either in large work projects or as your personal side-project grows. In this post I tackle it head-on to reduce the problem to some simple concepts and practical techniques.

Why do we install JavaScript package dependencies? For many different reasons. Perhaps you want developer tools such as a linter, code formatter or testing library. Or a tool to convert SCSS to CSS, or to minify CSS. Maybe you want a polyfill/ponyfill for an emergent or partially-implemented web standard (such as template-parts) so that you can safely use that feature. Perhaps you’ve decided that some JavaScript library is critical to your application – maybe a static site generator like Eleventy which runs at build-time, or a whole client-side JavaScript application framework like React. Whichever it is, the approach of installing and using some third-party tools can aid development by letting you concentrate on your application’s unique features rather than reinventing the wheel for already-solved common tasks.

In modern applications we can add tried-and-tested open source JavaScript tools, utilities and libraries by installing the relevant package from the npm registry. We do this using a package manager such as npm or yarn. When you or your collaborators install a dependency, it manifests itself as a module – a file or directory in the node_modules directory. You can then import that module into a server-side JavaScript file to start using it, by using Node.js’s require function (for older CommonJS modules) or the import statement (for newer ES modules).

When our package manager installs a package it logs it in the file package.json as a project dependency, which is to say that the project depends upon its presence to function properly. It then follows that anyone who wants to run the application should first install its dependencies.

And it’s the responsibility of the project owner (you and your team) to manage the project’s dependencies over time. This involves:

- updating packages when they release security patches;

- maintaining compatibility by staying on package upgrade paths; and

- removing installed packages when they are no longer necessary for your project.

While it’s important to keep your dependencies updated, in a recent survey by Sonatype 52% of developers said they find dependency management painful. And I have to agree that it’s not something I generally relish. However over the years I’ve gotten used to the process and found some things that work for me.

A simplified process

The whole process might go something like this (NB install yarn if you haven’t already).

# In a new project, start installing and managing 3rd-party packages.

# (only required if your project doesn’t already have a package.json)

yarn init # or npm init

# Install dependencies (in a project which already has a package.json)

yarn # or npm i

# Add a 3rd-party library to your project

yarn add package_name # or npm i package_name

# Add package as a devDependency.

# For tools only required in the local dev environment

# e.g. CLIs, hot reload.

yarn add -D package_name # or npm i package_name --save-dev

# Add package but specify a particular version or semver range

# https://devhints.io/semver

# It’s often wise to do this to ensure predictable results.

# caret (^) is useful: allows upgrade to minor but not major versions.

# is >=1.2.3 <2.0.0

yarn add package_name@^1.2.3

# Remove a package

# use this rather than manually deleting from package.json.

# Updates yarn.lock, package.json and removes from node_modules.

yarn remove package_name # or npm r package_name

# Update one package (optionally to a specific version/range)

yarn upgrade package_name

yarn upgrade package_name@^1.3.2

# Review (in a nice UI) all packages with pending updates,

# with the option to upgrade whichever you choose

yarn upgrade-interactive

# Upgrade to latest versions rather than

# semver ranges you’ve defined in package.json.

yarn upgrade-interactive --latest

Responding to a security vulnerability in a dependency

If you host your source code on GitHub it’s a great idea to enable Dependabot. Essentially Dependabot has your back with regard to any dependencies that need updated. You set it to send you automated security updates by email so that you know straight away if a vulnerability has been detected in one of your project dependencies and requires action.

Helpfully, if you have multiple GitHub repos and more than one of those include the vulnerable package you also get a round-up email with a message something like “A new security advisory on lodash affects 8 of your repositories” with links to the alert for each repo, letting you manage them all at once. Dependabot also works for a variety of languages and technologies, not just JavaScript, so for example in a Rails project it might email you to suggest bumping a package in your Gemfile.

Practical example

Let’s assume you’ve just received a security advisory alert regarding the minimatch package.

You can’t see any mention of minimatch in your repo’s package.json so the next thing to do is run the following:

npm ls minimatch

That’ll provide a friendly, condensed dependency tree view from which you’ll see that in your application, minimatch is a transitive dependency (a dependency of a dependency). Specifically, your direct dependencies 11ty and eslint both have a dependency on minimatch.

Assuming the fix version of the package is within the semver range you’ve already specified you can easily fix the issue with the following command:

npm update minimatch

This will update all the packages listed in your command to the latest version (specified by the tag config), respecting the semver constraints of both your package and its dependencies (if they also require the same package).

By default npm update will not update the semver values of direct dependencies in your project package.json. If you want to also update values in package.json you can run: npm update --save.

Automated updates

While it’s good to know how to do the above manually, note that for straightforward security fixes you can have Dependabot speed up and simplify the process for you. You can configure Dependabot to automatically open new Pull Requests to address vulnerabilities by updating the relevant dependency. It’ll give the PR a title like:

Bump lodash from 4.17.11 to 4.17.19

You just need to approve and merge that PR. This is great; it’s really simple and takes care of lots of cases.

Note 1: if you work on a corporate repo that is not set up to “automatically open PRs”, often you can still take advantage of GitHub’s intelligence with just one or two extra manual steps. Just follow the links in your GitHub security alert email.

Note 2: Dependabot can also be set to do automatic version updates even when your installed version does not have a vulnerability. You can enable this by adding a dependabot.yml to your repo. But so far I’ve tended to avoid unpredictability and excess noise by having it manage security updates only.

Gnarly manual updates

Sometimes Dependabot will alert you to an issue but is unable to fix it for you. Bummer.

This might be because the package owner has not yet addressed the security issue. If your need to fix the situation is not super-urgent, you could raise an issue on the package’s GitHub repo asking the maintainer (nicely) if they’d be willing to address it… or even submit a PR applying the fix for them. If you don’t have the luxury of time, you’ll want to quickly find another package which can do the same job. An example here might be that you look for a new CSS minifier package because your current one has a longstanding security issue. Having identified a replacement you’d then remove package A, add package B, then update your code which previously used package A to make it work with package B. Hopefully only minimal changes will be required.

Alternatively the package may have a newer version or versions available but Dependabot can’t suggest a fix because:

- the closest new version’s version number is beyond the allowed range you specified in package.json for the package; or

- Dependabot can’t be sure that upgrading wouldn’t break your application.

If the package maintainer has released newer versions then you need to decide which to upgrade to. Your first priority is to address the vulnerability, so often you’ll want to minimise upgrade risk by identifying the closest non-vulnerable version. You might then run yarn upgrade <package…>@1.3.2. Note also that you may not need to specify a specific version because your package.json might already specify a semver range which includes your target version, and all that’s required is for you to run yarn upgrade or yarn upgrade <package> so that the specific “locked” version (as specified in yarn.lock) gets updated.

On other occasions you’ll read your security advisory email and the affected package will sound completely unfamiliar… likely because it’s not one you explicitly installed but rather a sub-dependency. Y’see, your dependencies have their own package.json and dependencies, too. It seems almost unfair to have to worry about these too, however sometimes you do. The vulnerability might even appear several times as a sub-dependency in your lock file’s dependency tree. You need to check that lock file (it contains much more detail than package.json), work out which of your top-level dependencies are dependent on the sub-dependency, then go check your options.

Update: use yarn why sockjs (replacing sockjs as appropriate) to find out why a module you don’t recognise is installed. It’ll let you know what module depends upon it, to help save some time.

When having to work out the required update to address a security vulnerability in a package that is a subdependency, I like to quickly get to a place where the task is framed in plain English, for example:

To address a vulnerability in xmlhttprequest-ssl we need to upgrade karma to the closest available version above 4.4.1 where its dependency on xmlhttprequest-ssl is >=1.6.2

Case Study 1

I was recently alerted to a “high severity” vulnerability in package xmlhttprequest-ssl.

Dependabot cannot update xmlhttprequest-ssl to a non-vulnerable version. The latest possible version that can be installed is 1.5.5 because of the following conflicting dependency: @11ty/eleventy@0.12.1 requires xmlhttprequest-ssl@~1.5.4 via a transitive dependency on engine.io-client@3.5.1. The earliest fixed version is 1.6.2.

So, breaking that down:

- xmlhttprequest-ssl versions less than 1.6.2 have a security vulnerability;

- that’s a problem because my project currently uses version 1.5.5 (via semver range ~1.5.4), which I was able to see from checking package-lock.json;

- I didn’t explicitly install xmlhttprequest-ssl. It’s at the end of a chain of dependencies which began at the dependencies of the package @11ty/eleventy, which I did explicitly install;

- to fix things I want to be able to install a version of Eleventy which has updated its own dependencies such that there’s no longer a subdependency on the vulnerable version of xmlhttprequest-ssl;

- but according to the Dependabot message that’s not possible because even the latest version of Eleventy (0.12.1) is indirectly dependent on a vulnerable version-range of xmlhttprequest-ssl (~1.5.4);

- based on this knowledge, Dependabot cannot recommend simply upgrading Eleventy as a quick fix.
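The range arithmetic behind that breakdown can be sketched in a few lines. This is a simplified, hypothetical satisfies helper written for this post rather than the semver package that npm and yarn actually use: it only understands plain x.y.z versions with a leading ~ or ^, and ignores pre-release tags and every other range syntax.

```javascript
// Parse "1.2.3" into [1, 2, 3]. Pre-release tags are not handled.
function parseVersion(version) {
  const parts = version.split(".").map(Number);
  if (parts.length !== 3 || parts.some((n) => !Number.isInteger(n) || n < 0)) {
    throw new Error(`Unsupported version: ${version}`);
  }
  return parts;
}

// Compare two parsed versions: negative, zero or positive, like a sort callback.
function compare(a, b) {
  for (let i = 0; i < 3; i += 1) {
    if (a[i] !== b[i]) return a[i] - b[i];
  }
  return 0;
}

// Exclusive upper bound for a tilde or caret range.
function upperBound(operator, [major, minor, patch]) {
  // Tilde: patch-level changes only.
  if (operator === "~") return [major, minor + 1, 0];
  // Caret: the left-most non-zero part must not change.
  if (major > 0) return [major + 1, 0, 0];
  if (minor > 0) return [0, minor + 1, 0];
  return [0, 0, patch + 1];
}

function satisfies(version, range) {
  const operator = range[0];
  if (operator !== "^" && operator !== "~") {
    throw new Error(`Unsupported range: ${range}`);
  }
  const lower = parseVersion(range.slice(1));
  const candidate = parseVersion(version);
  return (
    compare(candidate, lower) >= 0 &&
    compare(candidate, upperBound(operator, lower)) < 0
  );
}

console.log(satisfies("1.5.5", "~1.5.4")); // true
console.log(satisfies("1.6.2", "~1.5.4")); // false
console.log(satisfies("1.9.0", "^1.2.3")); // true
console.log(satisfies("2.0.0", "^1.2.3")); // false
```

The second line is the crux of this case study: the earliest fixed version, 1.6.2, sits outside ~1.5.4, so no amount of re-installing will pull it in until the range itself changes upstream.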

So I could:

- decide it’s safe enough to wait some time for Eleventy to resolve it; or

- request Eleventy apply a fix (or submit a PR with the fix myself); or

- stop using Eleventy.

Case Study 2

A while ago I received the following security notification about a vulnerability affecting a side-project repo.

dot-prop < 4.2.1 “Prototype pollution vulnerability in dot-prop npm package before versions 4.2.1 and 5.1.1 allows an attacker to add arbitrary properties to JavaScript language constructs such as objects.”

I wasn’t familiar with dot-prop but saw that it’s a library that lets you “Get, set, or delete a property from a nested object using a dot path”. This is not something I explicitly installed but rather a sub-dependency – a lower-level library that my top-level packages (or their dependencies) use.

GitHub was telling me that it couldn’t automatically raise a fix PR, so I had to fix it manually. Here’s what I did.

- looked in package.json and found no sign of dot-prop;

- started thinking that it must be a sub-dependency of one or more of the packages I had installed, namely express, hbs, request or nodemon;

- looked in package-lock.json and via a Cmd-F search for dot-prop I found that it appeared twice;

- the first occurrence was as a top-level element of package-lock.json’s top-level dependencies object. This object lists all of the project’s dependencies and sub-dependencies in alphabetical order, providing for each the details of the specific version that is actually installed and “locked”;

- I noted that the installed version of dot-prop was 4.2.0, which made sense in the context of the GitHub security message;

- the other occurrence of dot-prop was buried deeper within the dependency tree as a dependency of configstore;

- I was able to work backwards and see that dot-prop is required by configstore, then Cmd-F search for configstore to find that it was required by update-notifier, which in turn is required by nodemon;

- I had worked my way up to a top-level dependency nodemon (installed version 1.19.2) and worked out that I would need to update nodemon to a version that had resolved the dot-prop vulnerability (if such a version existed);

- I then googled “nodemon dot-prop” and found some fairly animated GitHub issue threads between Remy the maintainer of nodemon and some users of the package, culminating in a fix;

- I checked nodemon’s releases and ascertained that my only option if sticking with nodemon was to install v2.0.3 – a new major version. I wouldn’t ideally install a version which might include breaking changes but in this case nodemon was just a devDependency, not something which should affect other parts of the application, and a developer convenience at that, so I went for it safe in the knowledge that I could happily remove this package if necessary;

- I opened package.json and within devDependencies manually updated nodemon from ^1.19.4 to ^2.0.4. (If I was in a yarn context I’d probably have done this at the command line). I then ran npm i nodemon to reinstall the package based on its new version range which would also update the lock file. I was then prompted to run npm audit fix which I did, and then I was done;

- I pushed the change, checked my GitHub repo’s security section and noted that the alert (and a few others besides) had disappeared. Job’s a goodun!
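The Cmd-F detective work in the steps above could also be scripted. The following is a rough sketch, assuming the older lock file layout described in this case study (a flat top-level dependencies object whose entries list what they require). The lock object is trimmed, made-up sample data: only the package names and the dot-prop version come from this case study, the "*" ranges are placeholders, and chainsTo is a hypothetical helper. Real lock files can also nest entries under a package’s own dependencies key, which this sketch ignores.

```javascript
// Trimmed, made-up lock data. Only the package names and the dot-prop
// version come from the case study; the "*" ranges are placeholders.
const lock = {
  dependencies: {
    nodemon: { requires: { "update-notifier": "*" } },
    "update-notifier": { requires: { configstore: "*" } },
    configstore: { requires: { "dot-prop": "*" } },
    "dot-prop": { version: "4.2.0" },
    express: { requires: {} },
  },
};

// Return every chain that leads from a top-level package down to the target.
function chainsTo(target, topLevel, lockfile) {
  const chains = [];

  function walk(name, path) {
    if (path.includes(name)) return; // guard against circular requires
    const nextPath = [...path, name];
    if (name === target) {
      chains.push(nextPath);
      return;
    }
    const entry = lockfile.dependencies[name];
    const requires = (entry && entry.requires) || {};
    Object.keys(requires).forEach((child) => walk(child, nextPath));
  }

  topLevel.forEach((name) => walk(name, []));
  return chains;
}

const chains = chainsTo("dot-prop", ["express", "nodemon"], lock);
console.log(chains.map((chain) => chain.join(" > ")));
```

In practice npm ls or yarn why will answer the same question without any custom code; the sketch is only meant to show what those commands are working out.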

Proactively checking for security vulnerabilities

It’s a good idea on any important project not to rely solely on automated alerts, but to proactively check for and address vulnerabilities yourself.

Check for vulnerabilities like so:

yarn audit

# for a specific level only

yarn audit --level critical

yarn audit --level high

Files and directories

When managing dependencies, you can expect to see the following files and directories.

- package.json

- yarn.lock

- node_modules (this is the directory into which packages are installed)

Lock files

As well as package.json, you’re likely to also have yarn.lock (or package.lock or package-lock.json) under source control too. As described above, while package.json can be less specific about a package’s version and suggest a semver range, the lock file will lock down the specific version to be installed by the package manager when someone runs yarn or npm install.

You shouldn’t manually change a lock file.

Choosing between dependencies and devDependencies

Whether you save an included package under dependencies (the default) or devDependencies comes down to how the package will be used and the type of website you’re working on.

The important practical consideration here is whether the package is necessary in the production environment. By production environment I don’t just mean the customer-facing website/application but also the environment that builds the application for production.

In a production “build process” environment (i.e. one which likely has the environment variable NODE_ENV set to production) the devDependencies are not installed. devDependencies are packages considered necessary for development only and therefore to keep production build time fast and output lean, they are ignored.

As an example, my personal site is JAMstack-based using the static site generator (SSG) Eleventy and is hosted on Netlify. On Netlify I added a NODE_ENV environment variable and set it to production (to override Netlify’s default setting of development) because I want to take advantage of faster build times where appropriate. To allow Netlify to build the site on each push I have Eleventy under dependencies so that it will be installed and is available to generate my static site.

By contrast, tools such as Netlify’s CLI and linters go under devDependencies. Netlify’s build process does not require them, nor does any client-side JavaScript.
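As a rough mental model of that split, here is a small sketch. It assumes, as described above, that an install skips devDependencies when NODE_ENV is set to production. The packageJson object is illustrative (the "*" ranges are placeholders, and netlify-cli and eslint are simply examples of dev-only tools), and packagesToInstall is a hypothetical helper, not part of npm or yarn.

```javascript
// Illustrative package.json contents; "*" is a placeholder range.
const packageJson = {
  dependencies: { "@11ty/eleventy": "*" },
  devDependencies: { "netlify-cli": "*", eslint: "*" },
};

// List the top-level packages an install would fetch for a given NODE_ENV,
// assuming devDependencies are skipped when it is "production".
function packagesToInstall(pkg, nodeEnv) {
  const names = Object.keys(pkg.dependencies || {});
  if (nodeEnv !== "production") {
    names.push(...Object.keys(pkg.devDependencies || {}));
  }
  return names;
}

console.log(packagesToInstall(packageJson, "production"));
console.log(packagesToInstall(packageJson, "development"));
```

If a production build fails because a tool is missing, this is usually the first thing to check: the tool probably needs to move from devDependencies to dependencies.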

Upgrading best practices

- Check the package CHANGELOG or releases on GitHub to see what has changed between versions and if there have been any breaking changes (especially when upgrading to the latest version).

- Use a dedicated PR (Pull Request) for upgrading packages. Keep the tasks separate from new features and bug fixes.

- Upgrade to the latest minor version (using yarn upgrade-interactive) and merge that before upgrading to major versions (using yarn upgrade-interactive --latest).

- Test your work on a staging server (or Netlify preview build) before deploying to production.


Jank-free Responsive Images

Here’s how to improve performance and prevent layout jank when browsers load responsive images.

Since the advent of the Responsive Web Design era many of us, in our rush to make images flexible and adaptive, stopped applying the HTML width and height attributes to our images. Instead we’ve let CSS handle the image, setting a width or max-width of 100% so that our images can grow and shrink but not extend beyond the width of their parent container.

However there was a side-effect in that browsers load text first and images later, and if an image’s dimensions are not specified in the HTML then the browser can’t assign appropriate space to it before it loads. Then, when the image finally loads, this bumps the layout – affecting surrounding elements in a nasty, janky way.

CSS-Tricks has written about this several times; however, I’d never found a solid conclusion.

Chrome’s Performance Warning

The other day I was testing this here website in Chrome and noticed that if you don’t provide images with inline width and height attributes, Chrome will show a console warning that this is negatively affecting performance.

Based on that, I made the following updates:

1. I added width and height HTML attributes to all images; and

2. I changed my CSS from img { max-width: 100%; } to img { width: 100%; height: auto; }.

NB the reason behind #2 was that I found that that CSS works better with an image with inline dimensions than max-width does.

Which dimensions should we use?

Since an image’s actual rendered dimensions will depend on the viewport size and we can’t anticipate that viewport size, I plumped for a width of 320 (a narrow mobile width) × height of 240, which fits with this site’s standard image aspect ratio of 4:3.

I wasn’t sure if this was a good approach. Perhaps I should have picked values which represented the dimensions of the image on desktop.

Jen Simmons to the rescue

Jen Simmons of Mozilla has just posted a video which not only confirmed that my above approach was sound, but also provided lots of other useful context.

Essentially, we should start re-applying HTML width and height attributes to our images, because in soon-to-drop Firefox and Chrome updates the browser will use these dimensions to calculate the image’s aspect ratio and thereby be able to allocate the exact required space.

The actual dimensions we provide don’t matter too much so long as they represent the correct aspect ratio.
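To see why only the ratio matters, here is a simplified model of the calculation. It is not browser code: reservedHeight is a hypothetical helper, and it assumes the image is rendered at a known width with its height left to follow the aspect ratio (as with width: 100%; height: auto).

```javascript
// Height that can be reserved for an image before it loads, given its
// width/height attributes and the width it is actually rendered at.
function reservedHeight(attrWidth, attrHeight, renderedWidth) {
  if (attrWidth <= 0 || attrHeight <= 0) {
    throw new RangeError("width and height attributes must be positive");
  }
  return renderedWidth * (attrHeight / attrWidth);
}

// Two different pairs of attributes with the same 4:3 ratio
// reserve the same space in an 800px-wide container.
console.log(reservedHeight(320, 240, 800)); // 600
console.log(reservedHeight(1600, 1200, 800)); // 600
```

So 320 × 240 is as good as any other 4:3 pair, as long as the real image files share that ratio.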

Also, if we use the modern srcset and sizes syntax to offer the browser different image options (like I do on this site), so long as the different images are the same aspect ratio then this solution will continue to work well.

There’s no solution at present for the Art Direction use case – where we want to provide different aspect ratios dependent on viewport size – but hopefully that will come along next.

I just tested this new feature in Firefox Nightly 72, using the Inspector’s Network tab to set “throttling” to 2G to simulate a slow-loading connection, and it worked really well!

Lazy Loading

One thing I’m keen to test is that my newly-added inline width and height attributes play well with loading="lazy". I don’t see why they shouldn’t and in fact they should in theory all support each other well. In tests so far everything seems good, however since loading="lazy" is currently only implemented in Chrome I should re-test images in Chrome once it adds support for the new image aspect ratio calculating feature, around the end of 2019.

Beyond Automatic Testing (matuzo.at)

Six accessibility tests Viennese Front-end Developer Manuel Matuzović runs on every website he develops, beyond simply running a Lighthouse audit.

Includes “Test in Grayscale Mode” and “Test with no mouse to check tabbing and focus styles”.

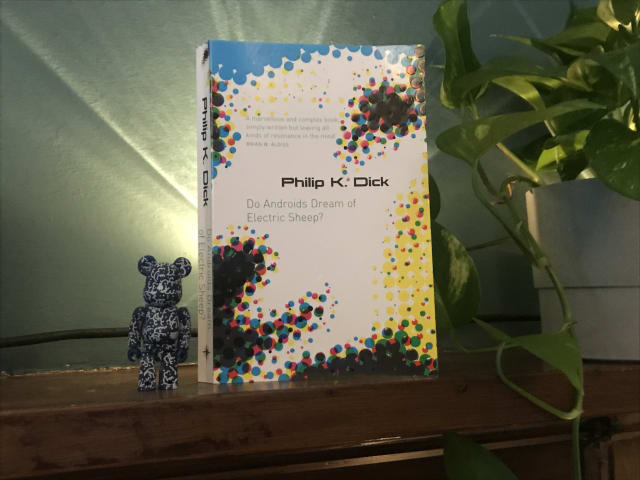

Do Androids Dream of Electric Sheep? by Philip K. Dick

In my ongoing quest to catch up on books I should have read years ago, I recently finished reading “Do Androids Dream of Electric Sheep?” – the book on which Blade Runner was based.

I’m a big Blade Runner fan – and also loved last year’s follow-up movie, Blade Runner 2049 – but for me, the book was a mixed bag.

It illuminated some of the parts of the film that in retrospect hadn’t fully sunk in – like why animals (such as Tyrell Corp’s owl) were so important in post-apocalyptic San Francisco, due to most species being endangered or extinct.

It also went much deeper into the question of whether empathy and similar social emotions were solely human abilities or could be felt by androids too. And I can now see why this was explored further with lead character Joe in Blade Runner 2049.

On the downside, the techno-religious angle of Mercerism didn’t really work for me – although maybe I didn’t pick up properly on the metaphor. Also, I was kind of disappointed to learn that there actually is an electric sheep in the story! I had always loved the title and thought it was just a really clever reference to counting sheep in your dreams, rather than something so literal. Never mind!

All of the downsides were worth it, however, for the detailed parts about Voigt-Kampff empathy tests. I have always loved the ideas and language around this, such as measuring androids for their Blush Response and other signs of empathy when asked questions which would normally elicit a reaction in humans.

All in, I’m glad I read it to gain the additional background to the movies.

I’m now off to watch Blade Runner again.

Original 1979 copies of Garden of Eden’s Everybody’s on a Trip regularly sell for £200+ so I was pretty happy to hear that it had just been reissued on Backatcha records… and even happier when I managed to snag a copy.

I first heard this stellar slice of deep funk a few years back on Kon and Amir’s compilation Off Track Volume One: The Bronx, and have been hankering after a proper copy ever since.

Check it out!

U.S. Supreme Court Favors Digital Accessibility in Domino’s Case

Digital products which are a public accommodation must be accessible, or their owners will be subject to a lawsuit (and probably lose).

This US Supreme Court decision on October 7, 2019 represents a pretty favourable win for digital accessibility against a big fish that was trying to shirk its responsibilities.

Replicating Jekyll’s markdownify filter in Nunjucks with Eleventy

Here, Ed provides some handy code to convert a Markdown-formatted string into HTML in Nunjucks via an Eleventy shortcode.

This performs the same role as the markdownify filter in Jekyll.

I’m now using it on this site in listings, using the shortcode to convert blog entry excerpts written in markdown (which might contain code or italics, etc) into the target HTML.